Encrypted Image Filtering Using Homomorphic Encryption

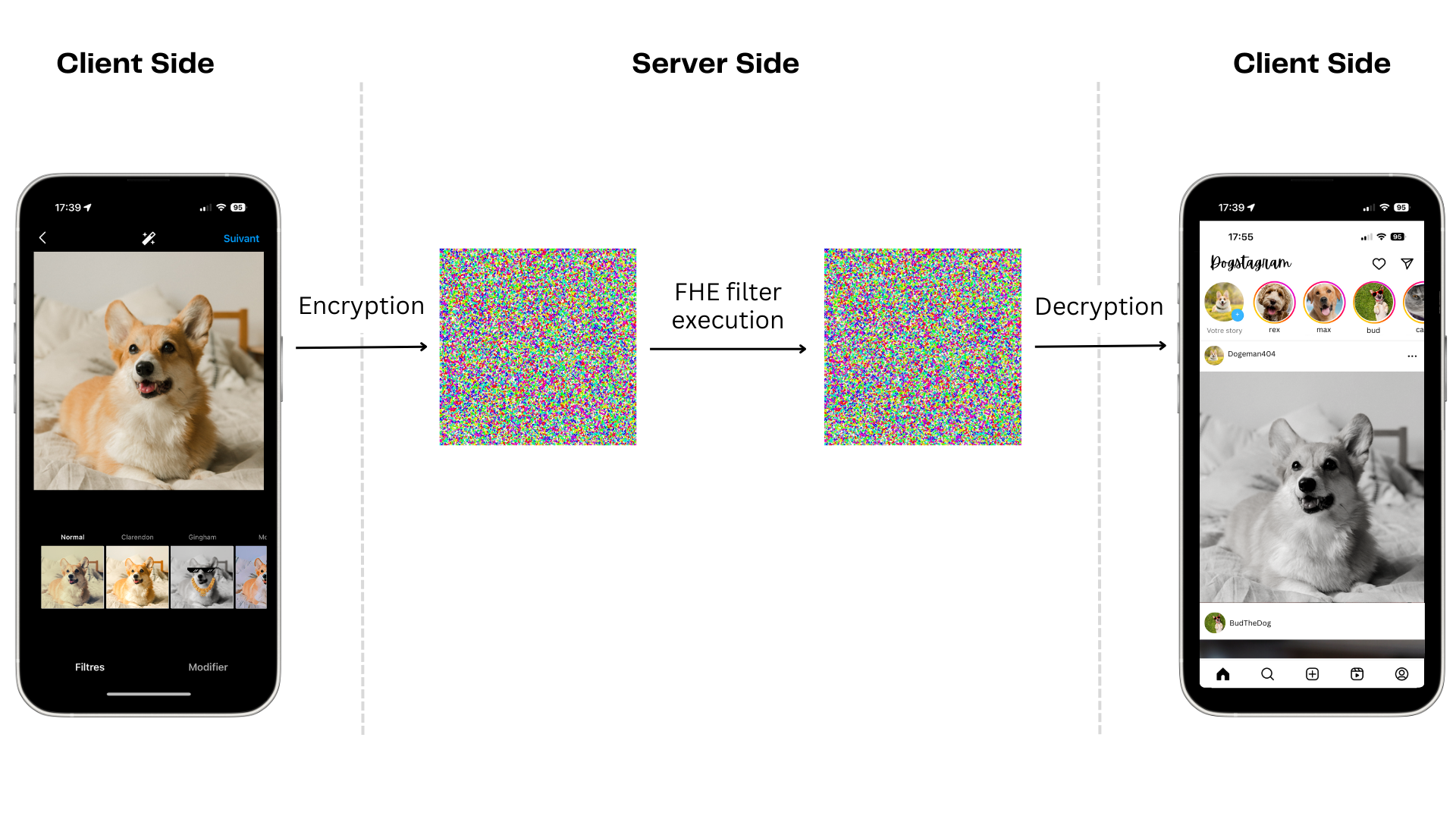

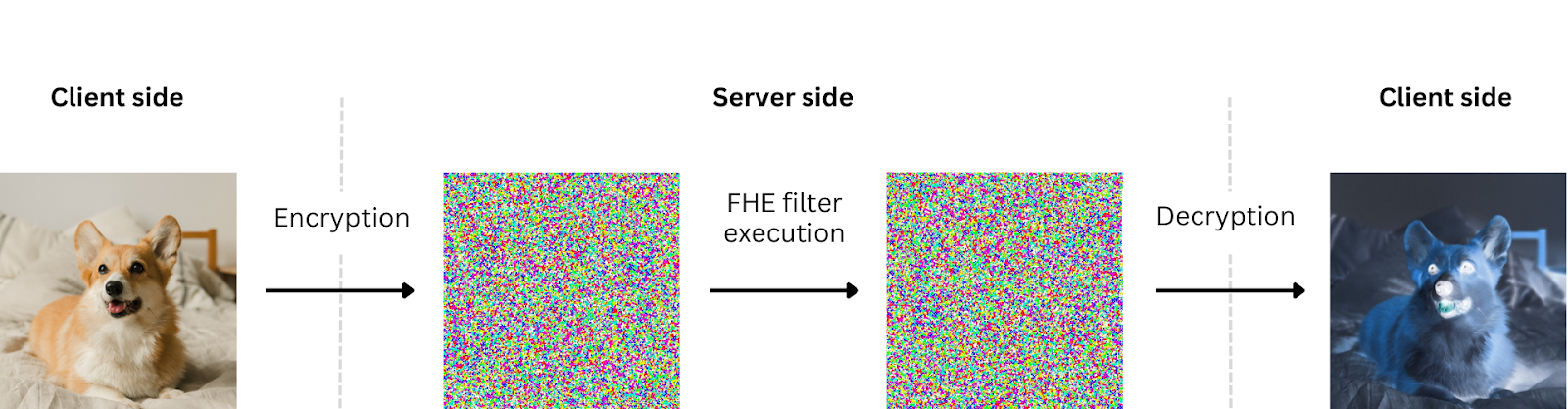

Zama has created a new Hugging Face space that applies filters to images homomorphically, using the developer-friendly tools in Concrete-Numpy and Concrete-ML. The data therefore stays encrypted both in transit and during processing.

Concrete-Numpy is a Python library that allows computation directly on encrypted data without needing to decrypt it first. Its API makes it straightforward to convert regular Python functions into their FHE-equivalent circuits and then deploy them within a Client-Server interface. Like Concrete-Numpy, Concrete-ML lets you use machine learning models in an FHE setting without any prior knowledge of cryptography.

Here, you'll build image processing filters as Torch models and use the utility functions in Concrete-ML to compile them. Building on Torch also leaves room for machine learning-based filters, such as image enhancement or noise reduction.

Installation

Create and activate a virtual environment using Python 3.8.15.

Install Concrete-ML 0.6.1 (for example with `pip install concrete-ml==0.6.1`), which comes with Concrete-Numpy 0.9.0.

Filters

A simple Torch module is a convenient way to build filters, mainly through convolution operators. The same interface also makes it possible to integrate other image processing computations built with machine learning models.

Convolution is an important operator because the most common filters (blurring, sharpening) are built from 2D kernels. Torch's convolution expects inputs shaped (batch, channels, height, width), whereas images are usually stored as NumPy arrays of shape (height, width, channels), so reshape operations are added before and after the operator. These operations are executed in FHE along with the convolution.
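To make the shape conventions concrete, here is a minimal NumPy sketch of the reshaping done around the operator, assuming images stored as (height, width, channels) arrays and a batch of a single image. The helper names `to_torch_layout` and `to_image_layout` are hypothetical and not part of Concrete-ML; inside a Torch model the same permutation would be written with tensor operations.

```python
import numpy as np

def to_torch_layout(image: np.ndarray) -> np.ndarray:
    """(H, W, C) image -> (1, C, H, W) batch, the layout Torch's conv2d expects."""
    return np.transpose(image, (2, 0, 1))[np.newaxis, ...]

def to_image_layout(batch: np.ndarray) -> np.ndarray:
    """(1, C, H, W) batch -> (H, W, C) image, undoing to_torch_layout."""
    return np.transpose(batch[0], (1, 2, 0))

image = np.arange(100 * 100 * 3, dtype=np.int64).reshape(100, 100, 3)
batch = to_torch_layout(image)
print(batch.shape)  # (1, 3, 100, 100)

restored = to_image_layout(batch)
print(np.array_equal(restored, image))  # True
```

The round trip is lossless: only the axis order changes, which is why these reshapes can run in FHE alongside the convolution without altering any pixel values.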

Build a filter Torch model using a 2D kernel.
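As a rough illustration of what such a filter model computes, the sketch below reimplements a single-kernel 2D convolution in plain NumPy rather than Torch. It assumes the same integer kernel is applied to every RGB channel independently (as a grouped convolution with one group per channel would do), with stride 1 and no padding, so a 3x3 kernel shrinks each spatial dimension by 2. The class name `NumpyConvFilter` is hypothetical.

```python
import numpy as np

class NumpyConvFilter:
    """Apply one 2D integer kernel to each channel of an (H, W, C) image."""

    def __init__(self, kernel):
        self.kernel = np.asarray(kernel, dtype=np.int64)

    def __call__(self, image: np.ndarray) -> np.ndarray:
        # (H, W, C) -> (C, H, W), mirroring the reshape done before the operator
        x = np.transpose(np.asarray(image, dtype=np.int64), (2, 0, 1))
        kh, kw = self.kernel.shape
        out_h = x.shape[1] - kh + 1
        out_w = x.shape[2] - kw + 1
        out = np.zeros((x.shape[0], out_h, out_w), dtype=np.int64)
        # Cross-correlation, as in Torch's conv2d: the kernel is not flipped.
        for i in range(kh):
            for j in range(kw):
                out += self.kernel[i, j] * x[:, i:i + out_h, j:j + out_w]
        # (C, H', W') -> (H', W', C), mirroring the reshape done after the operator
        return np.transpose(out, (1, 2, 0))

identity = NumpyConvFilter([[0, 0, 0], [0, 1, 0], [0, 0, 0]])
image = np.random.default_rng(0).integers(0, 256, size=(100, 100, 3))
filtered = identity(image)
print(filtered.shape)  # (98, 98, 3)
print(np.array_equal(filtered, image[1:-1, 1:-1]))  # True
```

The identity kernel makes a convenient sanity check: it should return the input with a one-pixel border removed.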

Create a sharpen filter by defining its kernel.
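A standard 3x3 sharpen kernel is shown below; the exact weights used in the demo may differ, so treat them as an assumption. Applying the kernel to a single 3x3 neighborhood shows its effect: the weights sum to 1, so flat regions keep their value, while a pixel brighter than its neighbors is pushed further away from them. The helper `apply_to_patch` is hypothetical.

```python
import numpy as np

sharpen_kernel = np.array(
    [
        [0, -1, 0],
        [-1, 5, -1],
        [0, -1, 0],
    ],
    dtype=np.int64,
)

def apply_to_patch(kernel: np.ndarray, patch: np.ndarray) -> int:
    """Weighted sum of a 3x3 neighborhood: the value of the center output pixel."""
    return int(np.sum(kernel * patch))

flat = np.full((3, 3), 100, dtype=np.int64)
bright_center = flat.copy()
bright_center[1, 1] = 120

print(int(sharpen_kernel.sum()))  # 1
print(apply_to_patch(sharpen_kernel, flat))  # 100
print(apply_to_patch(sharpen_kernel, bright_center))  # 200
```

The center pixel goes from 120 to 200 (5 * 120 - 4 * 100), which is the contrast boost that makes edges look sharper.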

Images and kernels must contain only integers, because the FHE implementation used in Concrete-Numpy currently supports only integer values.
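Because only integers are supported, a kernel whose textbook form has fractional weights, such as a 3x3 box blur (every weight 1/9), has to be rewritten. One possible approach, sketched below as an assumption rather than the demo's confirmed method, is to keep integer weights of 1 in the encrypted computation and divide by 9 in the clear after decryption.

```python
import numpy as np

blur_kernel = np.ones((3, 3), dtype=np.int64)  # integer stand-in for 1/9 weights
patch = np.array(
    [
        [10, 20, 30],
        [40, 50, 60],
        [70, 80, 90],
    ],
    dtype=np.int64,
)

# Stands in for the part that would run on encrypted data: an integer-only weighted sum.
encrypted_side_sum = int(np.sum(blur_kernel * patch))

# Done in the clear after decryption: normalize the sum back to a mean.
blurred_pixel = encrypted_side_sum // 9

print(encrypted_side_sum)  # 450
print(blurred_pixel)  # 50
```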

Simpler cases, such as inverting the colors of an RGB image, do not need a convolution at all. The Hugging Face Space demo has more examples.
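Color inversion maps each channel value v to 255 - v, using only integer subtraction. A minimal NumPy version of that computation follows; the function name `invert_colors` is hypothetical.

```python
import numpy as np

def invert_colors(image: np.ndarray) -> np.ndarray:
    """Map every channel value v in [0, 255] to 255 - v."""
    return 255 - np.asarray(image, dtype=np.int64)

pixel = np.array([[[0, 128, 255]]])  # a 1x1 RGB image
inverted = invert_colors(pixel)
print(inverted.tolist())  # [[[255, 127, 0]]]
print(np.array_equal(invert_colors(inverted), pixel))  # True
```

Since the output already stays within [0, 255], this filter needs no clipping, and applying it twice returns the original image.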

All of these computations are executed in FHE. Input and output images also require some pre- and post-processing in the clear: output values, for instance, must be clipped to the valid RGB range [0, 255], since the filters themselves do not enforce that constraint.
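To see why clipping matters, the sketch below takes hypothetical filter outputs that fall outside [0, 255] and compares clipping with a naive cast to `uint8`, which wraps out-of-range values around instead of saturating them.

```python
import numpy as np

# Hypothetical decrypted filter outputs, some outside the valid RGB range.
raw_output = np.array([-40, 0, 128, 255, 300], dtype=np.int64)

# Saturate to [0, 255] first, then convert to an 8-bit image type.
clipped = np.clip(raw_output, 0, 255).astype(np.uint8)

# Casting directly wraps modulo 256, which produces wrong colors.
wrapped = raw_output.astype(np.uint8)

print(clipped.tolist())  # [0, 0, 128, 255, 255]
print(wrapped.tolist())  # [216, 0, 128, 255, 44]
```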

Compiling

Once the filters are defined, compile them into their equivalent FHE circuits so they can be applied to encrypted images. Concrete-ML provides tools for compiling Torch models with Concrete-Numpy.

First, import the required compilation tools.

The compiler only supports static shapes, so this tutorial fixes RGB images to the shape (100, 100, 3). If this shape changes, the filters must be compiled again.
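Because a circuit is compiled for one fixed shape, it can help to validate inputs before encryption. The guard below is a hypothetical helper, not part of Concrete-ML: it simply rejects any image whose shape or dtype does not match what the filters were compiled for.

```python
import numpy as np

INPUT_SHAPE = (100, 100, 3)  # shape the filters are compiled for in this tutorial

def check_input_image(image: np.ndarray) -> np.ndarray:
    """Raise if the image cannot be fed to a circuit compiled for INPUT_SHAPE."""
    if image.shape != INPUT_SHAPE:
        raise ValueError(
            f"expected shape {INPUT_SHAPE}, got {image.shape}; recompile the filter"
        )
    if not np.issubdtype(image.dtype, np.integer):
        raise TypeError(f"expected an integer dtype, got {image.dtype}")
    return image

check_input_image(np.zeros((100, 100, 3), dtype=np.uint8))  # accepted

try:
    check_input_image(np.zeros((64, 64, 3), dtype=np.uint8))
except ValueError as error:
    print(error)
```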

The compilation process relies on a representative set of inputs, called an input set (see the documentation). The images in this set tell the compiler which ranges of values to expect, which it needs in order to compute the cryptographic parameters.
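As a back-of-the-envelope illustration of why value ranges matter, the sketch below computes the worst-case output range of the sharpen kernel for inputs in [0, 255], and the number of bits a signed integer would need to hold it. The real compiler derives ranges from the input set it observes, not from this analytical bound, so this is only an intuition aid; the helper names are hypothetical.

```python
import numpy as np

def worst_case_range(kernel, low=0, high=255):
    """Min and max of sum(kernel * patch) over all patches with values in [low, high]."""
    k = np.asarray(kernel, dtype=np.int64)
    positive = int(k[k > 0].sum())
    negative = int(k[k < 0].sum())
    # Positive weights are smallest at `low`, negative weights at `high`, and vice versa.
    return positive * low + negative * high, positive * high + negative * low

def signed_bits(lo, hi):
    """Smallest n such that [lo, hi] fits in a signed n-bit integer."""
    n = 1
    while lo < -(2 ** (n - 1)) or hi > 2 ** (n - 1) - 1:
        n += 1
    return n

sharpen_kernel = [[0, -1, 0], [-1, 5, -1], [0, -1, 0]]
lo, hi = worst_case_range(sharpen_kernel)
print(lo, hi)  # -1020 1275
print(signed_bits(lo, hi))  # 12
```

Even though the inputs fit in 8 bits, the intermediate sharpen values can need about 12 signed bits, which is the kind of information the input set provides to the compiler.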

Here, the input set is made of synthetic RGB images filled with random integers (3 channels, values from 0 to 255). It is large enough to cover a variety of value ranges. Alternatively, the input set could be built from several existing images, as long as they all have the same size.
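A minimal NumPy sketch of such an input set follows. The number of images (100) and the random seed are assumptions, not values taken from the demo.

```python
import numpy as np

INPUT_SHAPE = (100, 100, 3)
N_IMAGES = 100  # assumed size of the input set

rng = np.random.default_rng(seed=0)

# Each synthetic image holds random integers in [0, 255] for all 3 channels.
inputset = [
    rng.integers(0, 256, size=INPUT_SHAPE, dtype=np.int64) for _ in range(N_IMAGES)
]

stacked = np.stack(inputset)
print(len(inputset), stacked.shape)  # 100 (100, 100, 100, 3)
print(bool(stacked.min() >= 0 and stacked.max() <= 255))  # True
```

Every image shares the same shape, which matches the static-shape requirement of the compiler.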

Then compile the `sharpen_filter` model using this input set.

Client-Server

Concrete-Numpy provides tools for building a Client-Server interface around FHE circuits: saving and loading serialized client and server instances, encrypting inputs, executing an FHE circuit, and decrypting outputs.

You can then build a simple Client-Server interface that handles these filters using Concrete-Numpy’s API.

Development interface

Save the files needed to develop the interface.

Load the client and server interfaces.

Client interface

The client interface has two main steps: encrypting the inputs and decrypting the outputs. As with the filters, some pre-processing is required before encryption and some post-processing after decryption. Encrypted images also need to be serialized before being sent to the server, and deserialized after being received from it.

Pre-process, encrypt, and serialize an input image before sending it to the server.

Deserialize, decrypt, and post-process an output received from the server.

Generate the keys and retrieve the serialized evaluation keys before sending them to the server.

Server interface

To execute the filter in FHE, the server needs to load the FHE circuit and run it on the encrypted input image using the evaluation keys. As on the client side, Concrete-Numpy's API lets you easily serialize and deserialize these objects.

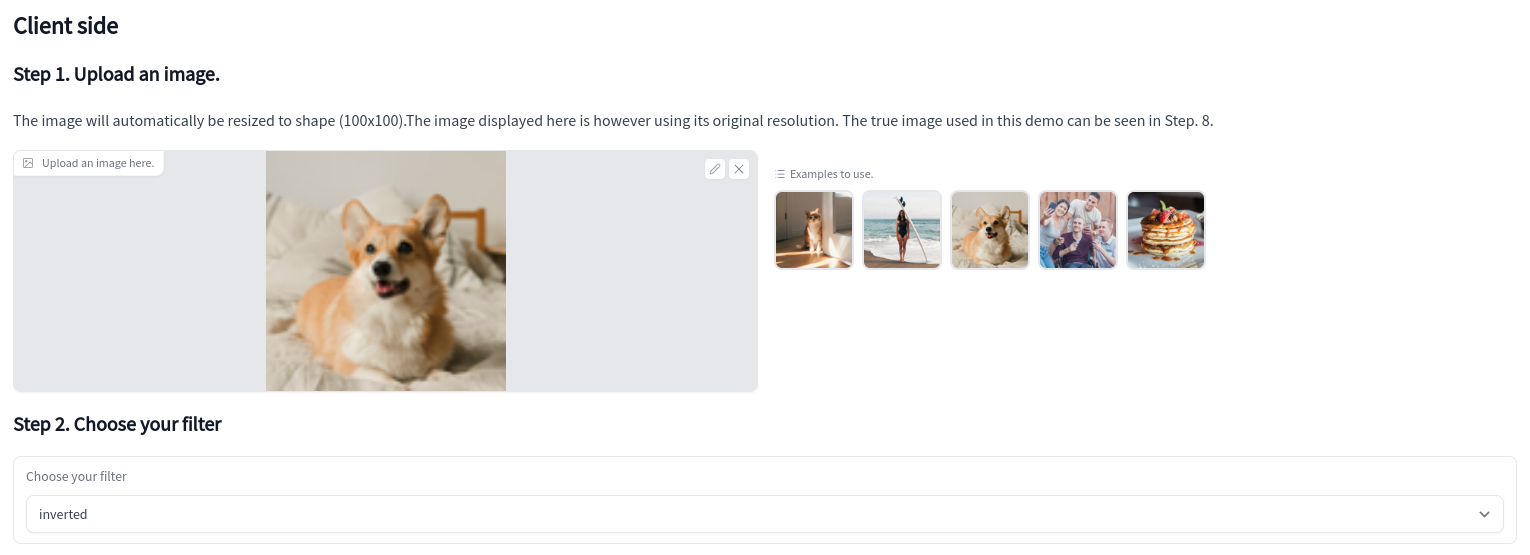

Demo

Check out the Hugging Face space to see the above steps in action. You can upload an image and then pick a filter. Uploaded images are automatically cropped and resized to a (100, 100) shape under the hood.
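The demo's exact cropping and resizing code is not reproduced here. As an assumed stand-in, a center crop to a square followed by nearest-neighbor resampling in NumPy turns an RGB image of any size into the required (100, 100, 3) shape. The function name `crop_and_resize` is hypothetical.

```python
import numpy as np

def crop_and_resize(image: np.ndarray, size: int = 100) -> np.ndarray:
    """Center-crop an (H, W, C) image to a square, then resize it to (size, size, C)."""
    height, width = image.shape[:2]
    side = min(height, width)
    top = (height - side) // 2
    left = (width - side) // 2
    square = image[top:top + side, left:left + side]
    # Nearest-neighbor sampling: map each output index to a source index.
    indices = (np.arange(size) * side) // size
    return square[indices][:, indices]

photo = np.random.default_rng(0).integers(0, 256, size=(240, 320, 3), dtype=np.uint8)
print(crop_and_resize(photo).shape)  # (100, 100, 3)
```

Nearest-neighbor sampling keeps pixel values as integers, so no extra rounding is needed before encryption; a smoother resampling method could be swapped in if image quality matters more.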

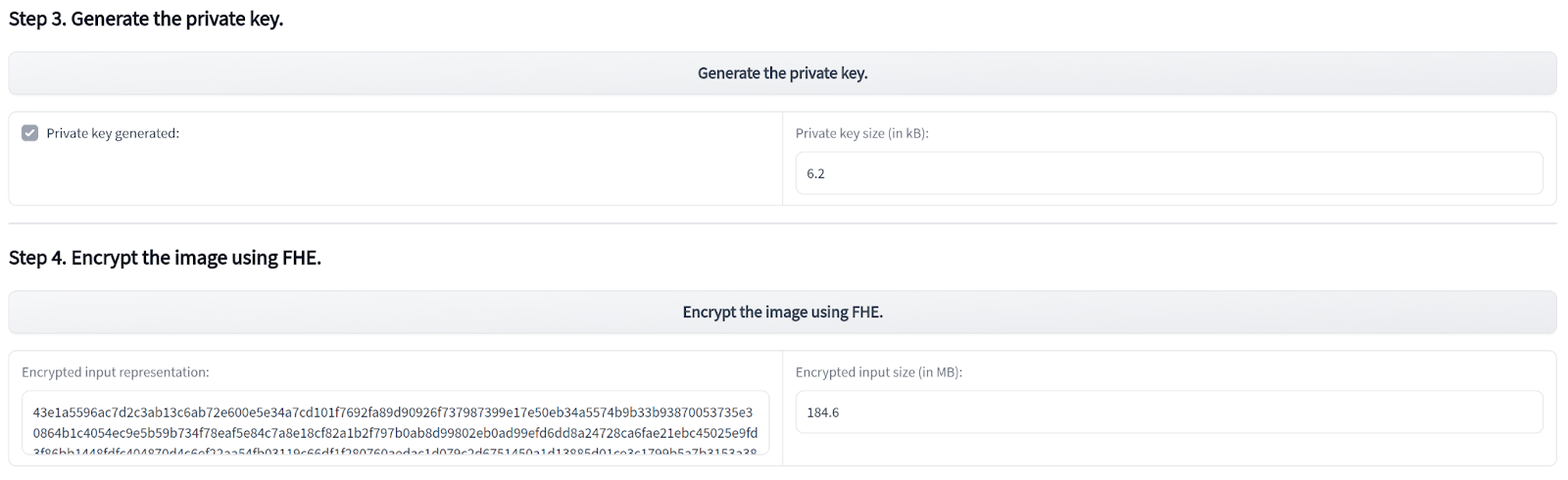

You then generate the keys and encrypt the image.

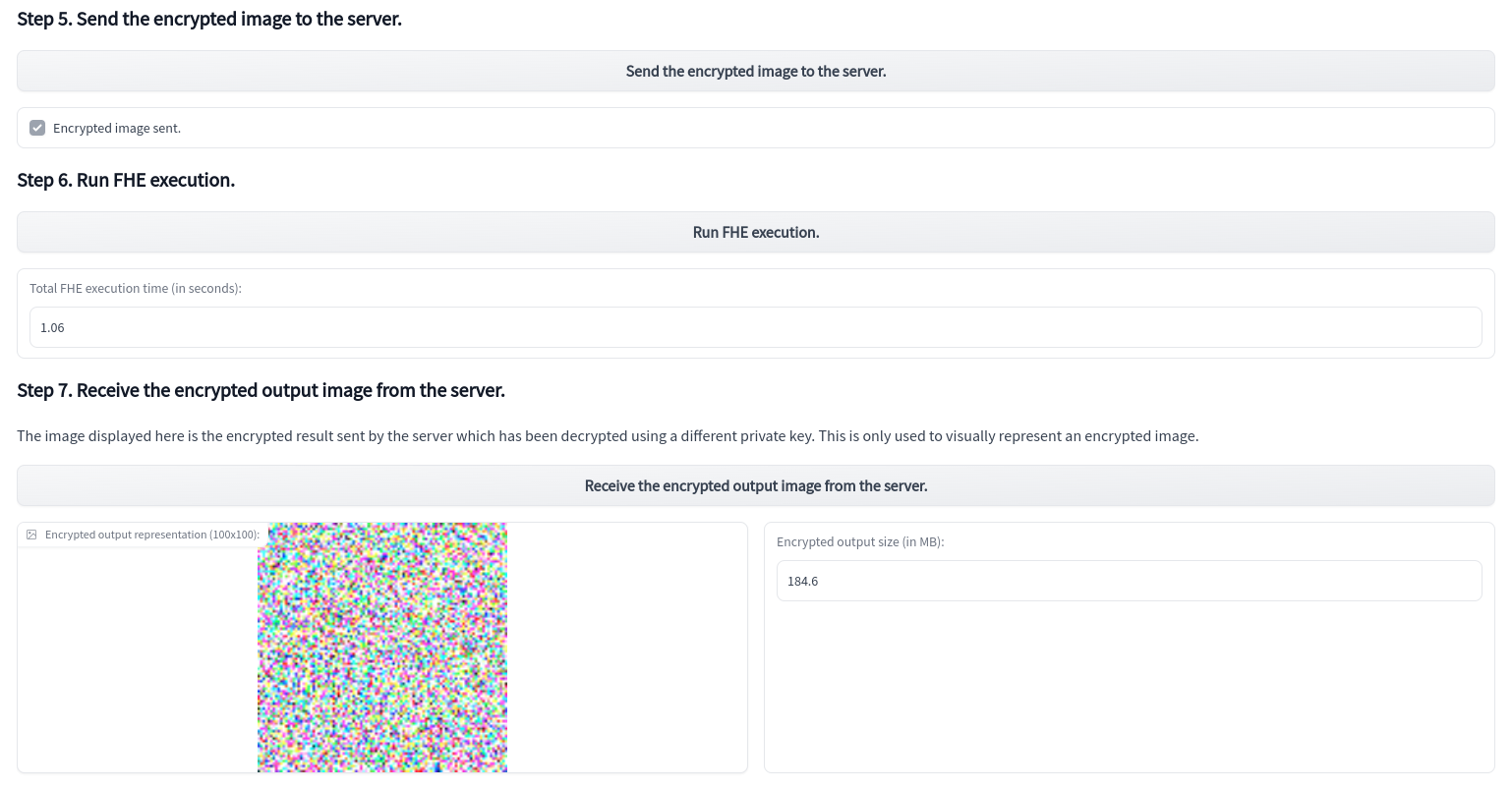

Next, send the encrypted image to the server, which executes the chosen filter in FHE, and receive the encrypted output. The server has access to neither the input image nor the output result: everything stays encrypted from start to finish. Execution takes no more than a few seconds per image on a machine with 8 vCPUs.

The demo also displays a representation of the encrypted output. It is simulated by decrypting the output with a randomly generated private key: since an attacker would not know the real key, all they could see is an image of random pixels. The legitimate user, who holds the real key, can still decrypt the actual result.

Currently, the client and the server both run on the same machine, for technical reasons unrelated to the framework. Future demos will run them on separate machines.

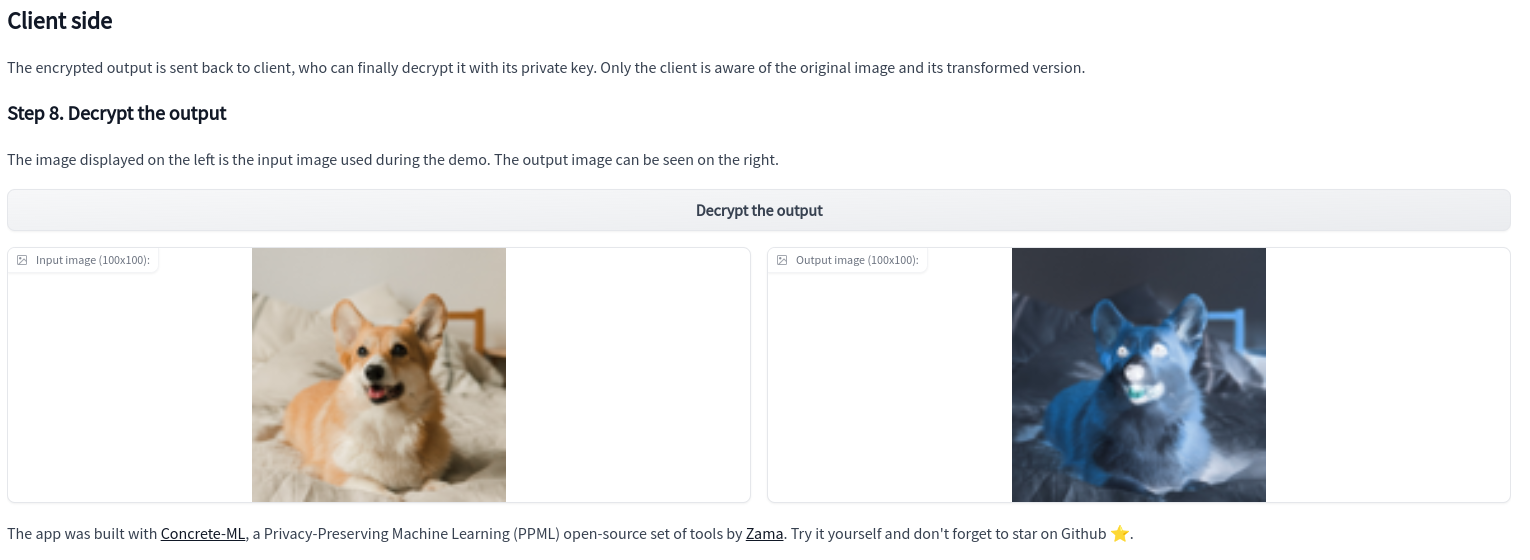

Finally, decrypt the output and compare it to the original image.

Conclusion

This Hugging Face space lets anyone interact with the fundamental principles of FHE as applied to image processing. Zama first introduced encrypted image filtering as dummy code in the 6-minute introduction to homomorphic encryption. This tutorial showed how to build common image processing filters as Torch models and run them in real time. With Concrete-Numpy and Concrete-ML, you can easily convert these models into their equivalent FHE circuits and then deploy those circuits behind a Client-Server interface. Such an interface could power a service that lets users apply any image filter while keeping the image private from the server. Filters based on machine learning models, such as image enhancement or noise reduction, could also be built this way.

- ⭐️ Like our work? Support us and star the GitHub repo.

- 👋 Questions? Join the Zama community.

- 💸 Join the Zama Bounty Program. Solve problems, write tutorials, and earn rewards, with more than €500,000 in prizes.
